AI Compliance 2026: Every Regulation You Must Know Now

Here is a number that should stop you cold: 78% of business executives cannot pass an independent AI governance audit within 90 days, according to the Grant Thornton 2026 AI Impact Survey. Now consider the other side of that equation: 63% of organisations have already operationalised AI across critical business functions. The arithmetic is brutal. Assuming both figures describe the same population, even if every audit-ready organisation were among the 63% running AI, at least 41 of those 63 percentage points (63 + 78 - 100) would still be unprepared. More than half of all companies running AI at scale are doing so in a governance vacuum, exposed to regulatory penalties, reputational damage, and the kind of institutional trust collapse that no model update can reverse.

This is not a future problem. Enforcement deadlines are no longer abstract. The EU AI Act’s high-risk obligations activate on August 2, 2026. US state laws are already in force. ISO/IEC 42001 is appearing in procurement contracts and cyber insurance requirements. The window to act is measured in weeks, not quarters.

This article delivers everything you need: every active or enforcing AI regulation in 2026, a practical eight-step compliance checklist, and the strategic argument for why governance is not a legal overhead — it is the condition that makes AI transformation possible. As we argued in depth at AI Transformation Is a Problem of Governance, Not Technology, the organisations failing at AI are not failing because their algorithms are weak. They are failing because accountability structures are absent.

Why 2026 Is the Year AI Compliance Became Mandatory

For the first two years of the enterprise AI wave — 2023 and 2024 — compliance was largely aspirational. Regulators signalled intent. Frameworks were published. Voluntary codes of conduct circulated. Enforcement, however, was minimal. That era is definitively over.

The shift happened in layers. The EU AI Act’s prohibited practices came into force in February 2025, followed by General Purpose AI model obligations in August 2025. The critical threshold — full enforcement of high-risk AI system obligations under Annex III — arrives on August 2, 2026. That is not a soft deadline. That is the date market surveillance authorities across EU member states gain full power to audit, demand corrective action, and levy fines.

In the United States, the picture is simultaneously more fragmented and more aggressive. Over 600 AI bills were introduced in state legislatures in Q1 2026 alone. New York’s RAISE Act took effect March 19, 2026, imposing transparency, safety, and reporting requirements on frontier model developers. The White House released its National Policy Framework for AI on March 20, 2026 — but Congress is still deliberating a federal approach, meaning state-level obligations remain the operative reality for most organisations.

The December 2025 Executive Order attempted federal preemption over state AI laws, and the DOJ established an AI Litigation Task Force in January 2026 to pursue that goal. But legal resolution remains uncertain. Building a compliance strategy on the assumption that federal preemption will arrive in time is a risk no responsible organisation should take.

Key insight: AI compliance in 2026 is no longer aspirational. It is an operational requirement with financial, legal, and reputational consequences for non-compliance.

The EU AI Act — Your August 2, 2026 Deadline Explained

The EU AI Act is the most consequential AI regulation in force today, and August 2, 2026 is the date that transforms it from a compliance framework into an enforcement reality. Here is what every organisation needs to understand before that date arrives.

What the August 2, 2026 Deadline Actually Means

This date marks the full enforcement of Annex III high-risk AI system obligations. From August 2, 2026, market surveillance authorities across EU member states can conduct audits, issue corrective orders, require system withdrawals, and levy fines — without any grace period or transition accommodation.

The financial exposure is substantial. Fines for prohibited AI practices reach up to €35 million or 7% of global annual turnover — whichever is higher. Violations related to high-risk AI systems carry fines up to €15 million or 3% of global turnover. Even providing misleading information to regulators can cost €7.5 million or 1% of turnover. For context, GDPR enforcement generated over €4 billion in fines between 2018 and 2025. The EU AI Act carries equivalent ambition.
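
To make those ceilings concrete, here is a minimal sketch that computes the upper bound of a fine as the larger of the fixed amount and the turnover percentage. The amounts and percentages are the ones quoted above; the function name `max_fine_eur`, the tier labels, and the example turnover are illustrative assumptions, and the sketch assumes the "whichever is higher" rule applies to all three tiers.

```python
from decimal import Decimal

# Ceilings quoted in this article: (fixed cap in EUR, share of global annual turnover).
# The tier labels are hypothetical names chosen for this sketch.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_violation": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine_eur(tier: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share."""
    fixed_cap, turnover_share = FINE_TIERS[tier]
    return max(fixed_cap, turnover_share * global_turnover_eur)


if __name__ == "__main__":
    turnover = Decimal("2000000000")  # hypothetical EUR 2 billion global turnover
    for tier in FINE_TIERS:
        print(tier, max_fine_eur(tier, turnover))
```

For a company with €2 billion in turnover, the percentage dominates in the first two tiers; for a company with €100 million in turnover, the fixed caps dominate in all three. This is a ceiling, not a prediction of what a regulator would actually impose.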

The extraterritorial reach mirrors GDPR exactly. Any organisation — regardless of where it is headquartered — must comply if its AI systems operate within the EU or produce outputs affecting EU residents. If your AI touches EU users, you are in scope.

The Four Risk Tiers — Know Where Your Systems Land

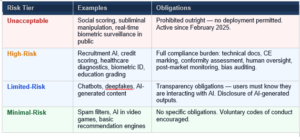

The EU AI Act uses a risk-based classification system, and every AI system your organisation deploys must be categorised into one of four tiers: unacceptable risk (prohibited practices), high risk, limited risk, and minimal risk.

The practical implication is critical: most enterprise AI deployed today — in HR, finance, healthcare, and education — falls into the high-risk tier. Full compliance obligations are not optional for these systems.

Provider vs Deployer — Who Is Responsible for What

The EU AI Act distinguishes clearly between providers (organisations that build and place AI systems on the market) and deployers (organisations that use AI systems in their operations). The obligations differ significantly.

Providers carry the full compliance burden: technical documentation, CE marking, third-party conformity assessments for certain high-risk categories, post-market monitoring infrastructure, and incident reporting protocols.

Deployers must implement human oversight, maintain operational logs, monitor AI performance continuously, and ensure staff have sufficient AI literacy to operate systems responsibly.

Important: A proposal called the Digital Omnibus could delay some Annex III obligations to December 2027. Until that delay is confirmed, treat August 2, 2026 as the binding deadline and do not plan around a postponement.

US AI Compliance in 2026 — The State Patchwork You Must Navigate

There is no single federal AI law in the United States. What exists instead is a rapidly expanding mosaic of state legislation, sector-specific regulatory guidance, and executive actions — with over 600 AI bills introduced in Q1 2026 alone. Navigating this landscape requires jurisdiction-specific attention rather than a single unified compliance strategy.

Colorado AI Act — The Most Comprehensive US State Law

Colorado’s AI Act is the most structurally comprehensive state-level AI law currently in force in the United States. It imposes risk management obligations, documentation requirements, and oversight mechanisms on developers and deployers of high-impact automated decision systems. The law targets AI used in consequential decisions — employment, housing, education, credit, healthcare — and requires documented impact assessments and consumer notification when AI substantially influences those decisions.

Critically, the Colorado AI Act is currently under revision. The Governor released a draft replacement bill in early 2026. Build compliance architecture that can adapt to the revised framework rather than hardwiring your processes to the current version.

California — A Layered Compliance Environment

California operates multiple parallel AI obligations simultaneously. The AI Transparency Act (SB 942), effective January 2026, requires watermarking of AI-generated content and disclosure when AI is used to produce synthetic media. CCPA automated decision-making risk assessments are now active, requiring documentation of how automated systems use personal data in consequential decisions. Full opt-out provisions from automated decision-making take effect in January 2027 — build the infrastructure now rather than scrambling at the next deadline.

New York RAISE Act — Effective March 19, 2026

The New York Responsible AI Safety and Education Act covers frontier AI model developers that operate in New York or whose models affect New York residents. It imposes transparency, safety, compliance, and reporting requirements, and it represents one of the most targeted state-level efforts to regulate the most capable AI models rather than just downstream applications. If your organisation develops or deploys frontier-scale AI, this law is immediately material.

Other States and Jurisdictions to Monitor

Illinois and New York City already mandate bias audits and human review for AI used in hiring decisions — NYC Local Law 144 has been active since 2023 and enforcement has accelerated through 2026.

Texas TRAIGA (effective January 2026) applies primarily to government use of AI with a narrower scope than Colorado — but signals Texas’s intent to regulate.

Utah and Washington have enacted GenAI disclosure requirements covering AI-generated content in consumer-facing communications.

Sector-specific federal frameworks add another compliance layer regardless of state law: HIPAA for healthcare AI, ECOA and the Fair Housing Act for credit and housing decisions, and EEOC guidance for employment AI.

Do not treat potential federal preemption as a compliance strategy. The risk of acting on an assumption that has not materialised outweighs the cost of building state-level compliance now.

ISO/IEC 42001 — The Global Compliance Standard Your Audit Needs

While regulatory timelines dominate headlines, ISO/IEC 42001 is the compliance standard that increasingly determines whether an organisation can credibly demonstrate AI governance to regulators, clients, insurers, and procurement partners. Many compliance strategies overlook it entirely. That is an increasingly costly oversight.

ISO/IEC 42001 is the international standard for AI Management Systems, the AI equivalent of ISO 27001 for cybersecurity. It provides a structured framework for AI policy, risk assessment, use case inventory, supplier obligations, and performance monitoring. It is not merely a voluntary best-practice document. It is being actively referenced in state legislation, EU AI Act implementation guidance, and enterprise procurement contracts as the baseline for responsible AI governance.

Federal contractors face aligned expectations through the NIST AI Risk Management Framework, which maps closely to ISO/IEC 42001 principles. The Treasury Department’s February 2026 financial services AI framework translated the NIST AI RMF into 230 specific control objectives for financial institutions — making ISO/IEC 42001-aligned governance a practical necessity for the entire financial sector.

2026 development: Cyber insurance carriers are now requiring evidence of AI risk management framework adoption — including ISO/IEC 42001 alignment — as a prerequisite for AI liability coverage. These requirements are appearing as “AI Security Riders” in commercial policies.

Implementing ISO/IEC 42001 requires: a documented AI policy, a structured risk assessment process, a comprehensive AI use case inventory, defined supplier obligations for third-party AI, and continuous performance monitoring with evidence of outcomes. This aligns directly with what EU AI Act auditors will expect — and with what enterprise clients and insurers are now contractually demanding.

This connection between technical standards and governance infrastructure is exactly the argument developed in our full analysis at AI Transformation Is a Problem of Governance. Governance is not a compliance overlay applied on top of AI operations. It is the operational infrastructure through which AI creates durable, defensible value.

The AI Compliance Checklist — 8 Steps Before August 2026

Compliance is not a single document. It is an operational posture built through systematic action across every AI system in your organisation. These eight steps must be completed in order of logical dependency.

Step 1: Build Your AI Inventory

Map every AI tool in use — including SaaS integrations, third-party APIs, and shadow AI deployed without IT oversight. No organisation can be compliant about systems it does not know it has. Start with a structured discovery process that covers every business unit, not just the IT department. Shadow AI is the most common compliance gap organisations discover at this stage.
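
One way to start is with a plain structured record per system. The sketch below is illustrative only: the `AISystemRecord` fields, the `source` labels, and the example entries are assumptions for this article, not a schema required by any regulation or standard.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory."""
    name: str
    business_unit: str
    vendor: str        # "internal" for systems built in-house
    source: str        # e.g. "saas", "third_party_api", "internal", "shadow"
    it_approved: bool  # False when the tool was adopted without IT oversight
    purposes: list[str] = field(default_factory=list)


def find_shadow_ai(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Return every system that was deployed without IT approval."""
    return [record for record in inventory if not record.it_approved]


if __name__ == "__main__":
    inventory = [
        AISystemRecord("resume-screener", "HR", "ExampleVendor", "saas", True,
                       ["candidate ranking"]),
        AISystemRecord("notes-summariser", "Sales", "unknown", "shadow", False),
    ]
    for record in find_shadow_ai(inventory):
        print("Needs review:", record.name, "in", record.business_unit)
```

Even a spreadsheet with these columns is enough to begin. What matters is that every business unit contributes rows, and that unapproved tools are recorded rather than hidden.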

Step 2: Classify Every AI System by Risk Tier

Apply the EU AI Act’s risk logic to every system in your inventory. Label each as unacceptable, high-risk, limited-risk, or minimal-risk using the four-tier framework above. Assign compliance obligations tier by tier. This classification determines every subsequent step — it is the foundation on which everything else is built.
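
A first-pass triage can be scripted before legal review. The sketch below is a deliberately crude assumption, not the Act's legal test: it treats the decision domains named in this article as high-risk, treats other user-facing systems as limited-risk, and relies on a manual flag for prohibited practices. The function name `triage` and its parameters are hypothetical.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Decision domains this article associates with high-risk systems.
HIGH_RISK_DOMAINS = {"employment", "credit", "healthcare", "housing", "education", "legal"}


def triage(domain: str, influences_decisions: bool, user_facing: bool,
           flagged_prohibited: bool = False) -> RiskTier:
    """Assign a provisional tier; a qualified reviewer must confirm the result."""
    if flagged_prohibited:
        return RiskTier.UNACCEPTABLE
    if influences_decisions and domain.lower() in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if user_facing:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    print(triage("employment", influences_decisions=True, user_facing=False).value)
    print(triage("marketing", influences_decisions=False, user_facing=True).value)
```

The value of a script like this is consistency: every system in the inventory gets a provisional label and a recorded rationale, which reviewers can then confirm or correct.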

Step 3: Assign Clear, Named Ownership

Every high-risk AI system requires a named Business Owner, Technical Lead, and Data Steward. Ungoverned AI is non-compliant AI. Ownership means accountability for documentation, monitoring, and incident response — not just budget approval. If no one can be named as responsible for a high-risk system, that system cannot be compliantly deployed.
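
The ownership rule is simple enough to check automatically. This is a minimal sketch, assuming ownership is stored as a mapping from role to a person's name; the role keys and the `missing_roles` helper are hypothetical names for illustration.

```python
# The three roles this article requires for every high-risk system.
REQUIRED_ROLES = ("business_owner", "technical_lead", "data_steward")


def missing_roles(owners: dict[str, str]) -> list[str]:
    """Return the required roles that have no named person assigned."""
    return [role for role in REQUIRED_ROLES if not owners.get(role, "").strip()]


if __name__ == "__main__":
    owners = {"business_owner": "A. Rivera", "technical_lead": ""}
    gaps = missing_roles(owners)
    if gaps:
        print("Deployment blocked, unassigned roles:", ", ".join(gaps))
```

A check like this can run as a gate in a release process, so that a high-risk system with an empty role cannot be deployed.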

Step 4: Produce Technical Documentation

Under EU AI Act Articles 8–15, high-risk AI systems require technical documentation covering: architecture decisions, training data sourcing and quality, intended use cases and foreseeable misuse scenarios, human oversight mechanisms, and performance monitoring controls. This is the most time-intensive step. Begin immediately — it cannot be assembled in the weeks before an audit.

Step 5: Run Bias and Fairness Audits

High-risk AI systems require documented bias and fairness testing before deployment and on an ongoing basis. For organisations hiring in New York City, Local Law 144 already mandates annual bias audits for AI used in employment decisions. Document all results formally. A verbal assurance that “the model is fair” is not an audit, and regulators will not treat it as one.
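
As an illustration of what a documented result can look like, the sketch below computes an impact ratio: each group's selection rate divided by the rate of the most-selected group. This is one commonly used fairness metric, not the only one. The 0.8 threshold is the conventional four-fifths rule of thumb, used here as an assumption rather than a figure quoted from any law above, and the group names and counts are invented.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate divided by the highest group's rate.

    outcomes: group name -> (number selected, number assessed).
    """
    rates = {}
    for group, (selected, assessed) in outcomes.items():
        if assessed <= 0:
            raise ValueError(f"group {group!r} has no assessed candidates")
        rates[group] = selected / assessed
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections, so ratios are undefined")
    return {group: rate / highest for group, rate in rates.items()}


def groups_below(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Return, in sorted order, the groups whose ratio falls below the threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    ratios = impact_ratios({"group_a": (50, 100), "group_b": (30, 100)})
    print(ratios)
    print("Flag for review:", groups_below(ratios))
```

A flagged group is a prompt for investigation, not a verdict. The audit record should keep the raw counts, the metric, the threshold, and the date alongside the conclusion.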

Step 6: Implement Human Oversight Mechanisms

High-risk AI under the EU AI Act must include a human in the loop — a defined, documented process through which a qualified person can review, override, or escalate AI decisions. Document the escalation path precisely: who intervenes, under what conditions, what gets logged, and how reversals are recorded. Vague commitments to “human oversight” do not meet the standard.
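
The four questions above (who intervenes, under what conditions, what gets logged, how reversals are recorded) map naturally onto a structured log entry. The sketch below is one possible shape, assuming an append-only log of JSON lines; the field names and the `record_event` helper are hypothetical, not a format prescribed by the Act.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"approved", "overridden", "escalated"}


@dataclass(frozen=True)
class OversightEvent:
    system: str
    reviewer: str        # who intervened
    trigger: str         # the condition that prompted the review
    ai_decision: str     # what the system originally decided
    human_action: str    # "approved", "overridden", or "escalated"
    final_decision: str  # the outcome after review, so reversals stay traceable
    reason: str
    timestamp: str       # UTC, ISO 8601


def record_event(system: str, reviewer: str, trigger: str, ai_decision: str,
                 human_action: str, final_decision: str, reason: str) -> str:
    """Validate an oversight event and return it as one JSON line for the log."""
    if human_action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {human_action!r}")
    if not reviewer.strip():
        raise ValueError("a named reviewer is required")
    event = OversightEvent(system, reviewer, trigger, ai_decision, human_action,
                           final_decision, reason,
                           datetime.now(timezone.utc).isoformat())
    return json.dumps(asdict(event), sort_keys=True)


if __name__ == "__main__":
    print(record_event("credit-scoring", "J. Doe", "applicant appeal", "decline",
                       "overridden", "approve", "income data was stale"))
```

Refusing to log an event without a named reviewer is a deliberate design choice: it turns "human oversight" from a policy statement into something an auditor can verify line by line.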

Step 7: Disclose AI Interactions to Users

Transparency obligations under the EU AI Act take full effect August 2026. California SB 942 is already active. Any chatbot, automated decision tool, or generative AI output presented to users must be identifiable as AI-generated. Audit every customer-facing and employee-facing AI touchpoint now and ensure disclosure mechanisms are live before the deadline.

Step 8: Build Post-Market Monitoring Infrastructure

Compliance is not a point-in-time audit. Build live monitoring infrastructure that tracks model drift, anomalous output patterns, bias metric changes, and incident logs continuously. Regulators expect ongoing evidence — not a snapshot assembled the week before an examination. This infrastructure is also your earliest warning system when something begins to go wrong.
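
Drift tracking can begin with a single statistic. The sketch below uses the Population Stability Index (PSI), a widely used measure of how far a current distribution has moved from a baseline. It is offered as one option, not a method any regulator mandates. The 0.2 alert threshold is a common rule of thumb and an assumption here, as are the example bin proportions.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               eps: float = 1e-6) -> float:
    """Compute PSI between two binned distributions given as proportions.

    expected: baseline share of observations per bin (for example, at validation).
    actual:   current share of observations per bin, using the same bin edges.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # avoid log(0) and division by zero for empty bins
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


if __name__ == "__main__":
    baseline = [0.25, 0.25, 0.25, 0.25]
    this_week = [0.10, 0.20, 0.30, 0.40]
    psi = population_stability_index(baseline, this_week)
    status = "ALERT" if psi > 0.2 else "ok"  # 0.2 is an assumed threshold
    print(f"PSI={psi:.3f} status={status}")
```

Storing each computed value with a timestamp gives you the continuous evidence trail described above, and an alert threshold turns the same number into an early warning.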

The organisations that will pass their first audit are the ones that built compliance as an operational capability — not the ones that assembled documentation in the weeks before a deadline.

The Compliance Penalty Most Organisations Overlook — Trust

Every article about AI compliance covers fines. Fines are real, material, and well-documented. But the most damaging consequence of AI governance failure is not the fine — it is the organisational trust collapse that follows a visible AI failure.

When AI produces a biased outcome, takes an unauthorised action, or makes an unexplainable decision in a high-stakes context — and that failure becomes visible internally or publicly — the reaction is consistent and severe. Teams stop trusting the tools. Managers impose informal bans. Leaders become risk-averse about further AI investment. The transformation programme that took months to build stalls or reverses entirely.

This is not a hypothetical risk. It is the pattern observed across multiple enterprise AI deployments in 2024 and 2025. The organisations that recovered quickly were those with governance frameworks already in place — because governance meant they had evidence of what went wrong, a defined process for correcting it, and a credible commitment to responsible deployment that stakeholders could verify.

This is precisely the argument at the centre of AI Transformation Is a Problem of Governance, Not Technology. AI transformation does not fail because algorithms are weak. It fails because accountability structures are absent, risk ownership is unclear, and oversight mechanisms are designed as compliance theatre rather than operational infrastructure. Compliance, built correctly, is not a constraint on AI adoption — it is the condition that makes sustained, scalable AI adoption possible.

FAQ — AI Compliance 2026

What is the EU AI Act deadline in 2026?

August 2, 2026 is the enforcement date for high-risk AI systems under Annex III. From this date, market surveillance authorities can audit organisations, demand documentation, and issue fines. Violations of high-risk obligations carry fines of up to €15 million or 3% of global annual turnover; the higher ceiling of €35 million or 7% applies to prohibited AI practices.

Does the EU AI Act apply to companies outside Europe?

Yes. Like GDPR, the EU AI Act has extraterritorial reach. Any organisation — regardless of where it is headquartered — must comply if its AI systems operate within the EU or produce outputs affecting EU residents.

Is there a federal AI law in the United States in 2026?

No. The US has no single comprehensive federal AI law as of 2026. The Trump administration’s December 2025 Executive Order is attempting to preempt state laws, but organisations must still navigate a patchwork of enacted state laws, more than 600 state bills introduced in Q1 2026 alone, and sector-specific regulations.

What is a high-risk AI system under the EU AI Act?

A high-risk AI system is one that makes or substantially influences decisions affecting employment, credit, healthcare, housing, education, or legal outcomes. These systems face the strictest obligations: technical documentation, bias testing, human oversight, and conformity assessment before deployment.

What is ISO/IEC 42001 and do I need it?

ISO/IEC 42001 is the international standard for AI Management Systems — the AI equivalent of ISO 27001 for cybersecurity. It is referenced in state legislation, EU AI Act guidance, and increasingly required by enterprise procurement contracts and cyber insurance carriers as a baseline for responsible AI governance.

What happens if I don’t comply with the EU AI Act?

Fines for prohibited AI practices reach €35 million or 7% of global annual turnover. High-risk violations carry penalties up to €15 million or 3% of turnover. Providing misleading information to regulators carries fines up to €7.5 million or 1% of turnover. In each case, whichever figure is higher applies.

What is the Colorado AI Act?

Colorado’s AI Act is the most comprehensive US state AI law, targeting high-impact automated decision systems used in employment, credit, housing, education, and healthcare. It requires risk management documentation, impact assessments, and consumer notifications. A replacement bill is currently under review — monitor legislative updates closely.

Is AI transformation a governance problem or a technology problem?

Governance. In 2026, organisations are failing at AI transformation not because their models underperform, but because accountability structures, risk ownership, and oversight mechanisms are absent. The organisations that can demonstrate governance are the ones scaling AI successfully and sustainably.

Conclusion — Governance Is the Infrastructure AI Runs On

AI compliance in 2026 is not a legal checkbox. It is the operational infrastructure that determines whether an organisation can deploy AI at scale, sustain adoption through setbacks, pass the audits that clients and regulators will demand, and build the internal trust that makes ongoing AI investment worthwhile.

The regulations are no longer theoretical. The EU AI Act’s high-risk obligations become enforceable on August 2, 2026: months away, not years. US state laws are already active across Colorado, California, New York, Illinois, Texas, Utah, and Washington. ISO/IEC 42001 is appearing in insurance requirements and procurement contracts. The organisations that treat compliance as infrastructure now will hold a significant competitive advantage over those treating it as a last-minute project.

The eight steps in this article provide a practical starting point. But the deeper argument — the case for why governance is not a cost of AI deployment but the precondition for it — deserves a fuller treatment. For the complete strategic case for why AI transformation is fundamentally a governance challenge, and how to build the framework that resolves it, read our full analysis: AI Transformation Is a Problem of Governance, Not Technology.

The organisations that get this right in 2026 will not just be compliant. They will be the ones still scaling AI in 2030.